Challenges in Machine Learning: Complete Guide for 2025 to 2030

Machine learning powers everything from your smartphone recommendations to autonomous vehicles. You see its impact daily, yet the technology faces obstacles that prevent many organizations from achieving their goals. The global machine learning market reached $113.10 billion in 2025 and is projected to grow to $503.40 billion by 2030. Understanding these challenges helps you navigate implementation successfully.

Professionals across industries encounter similar roadblocks. Data scientists spend hours cleaning messy datasets. Companies invest millions in infrastructure only to see models fail in production. Teams struggle to explain how their algorithms make decisions. Each challenge demands specific solutions, and recognizing them early saves time and resources.

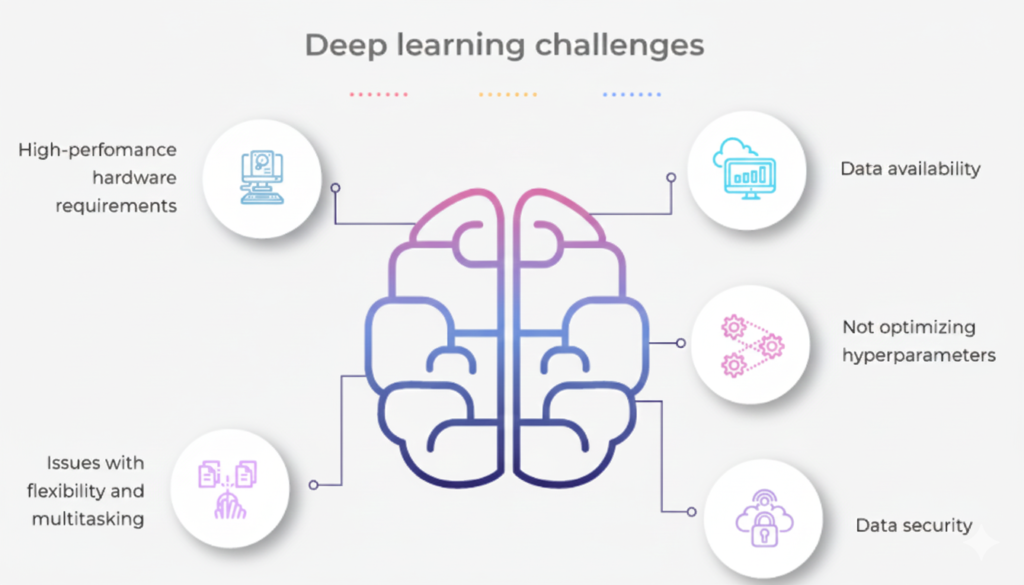

What Are the Main Challenges in Machine Learning?

You face several critical obstacles when implementing machine learning systems. Poor data quality tops the list. Models trained on incomplete or biased data produce unreliable results. Privacy and data governance risks like data leaks are the leading AI concerns across the globe, selected by 42% of North American organizations and 56% of European ones.

Organizations report deployment difficulties at alarming rates. Gartner reports that only about 15% of trained ML models reach production due to deployment hurdles. Technical complexity, resource constraints, and skill shortages compound these problems.

The challenges extend beyond technical issues. Ethical concerns about fairness and transparency demand attention. Regulatory compliance adds another layer of complexity. Each barrier requires careful planning and strategic solutions to overcome.

How Does Data Quality Impact Machine Learning Success?

Your model’s performance depends entirely on the data you feed it. Garbage in means garbage out. Incomplete records, inconsistent formatting, and missing values sabotage even the most sophisticated algorithms. Real-world data rarely arrives clean and organized.

Healthcare systems face this challenge constantly. Patient records contain gaps, errors, and inconsistencies. A model trained on such data might misdiagnose conditions or overlook critical patterns. Financial institutions deal with similar problems when transaction data lacks standardization.

Roughly 70–80% of ML project time goes into data collection and cleaning rather than model development. Data scientists spend countless hours preprocessing instead of building innovative solutions. Organizations must establish robust data governance frameworks to address quality issues at their source.

Solutions include automated data validation, standardized collection processes, and continuous monitoring. Synthetic data generation helps fill gaps when real data proves insufficient. Investing in data infrastructure pays dividends throughout the model lifecycle.
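To make automated data validation concrete, here is a minimal sketch that checks one record for missing values, wrong types, out-of-range numbers, and inconsistent date formats. The field names (`patient_id`, `age`, `visit_date`), the age range, and the date format are illustrative assumptions, not a prescribed schema.

```python
from datetime import datetime

# Hypothetical schema for illustration: field name -> expected type
REQUIRED_FIELDS = {"patient_id": str, "age": int, "visit_date": str}

def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None or value == "":
            problems.append(f"{field}: missing")
        elif not isinstance(value, expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")

    # Range check (assumed plausible bounds for a human age)
    age = record.get("age")
    if isinstance(age, int) and not 0 <= age <= 120:
        problems.append("age: out of range")

    # Format check (assumed ISO-style dates)
    date = record.get("visit_date")
    if isinstance(date, str) and date:
        try:
            datetime.strptime(date, "%Y-%m-%d")
        except ValueError:
            problems.append("visit_date: not in YYYY-MM-DD format")
    return problems
```

Running a check like this at ingestion time, and rejecting or quarantining records that return a non-empty list, addresses quality issues before they reach training.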

Why Do Imbalanced Datasets Create Problems?

Your dataset might contain 99% of one class and only 1% of another. This imbalance causes models to ignore minority classes completely. The algorithm learns to predict the majority class every time because it maximizes accuracy metrics. Real-world consequences can be severe.

Fraud detection systems exemplify this challenge. In financial systems, fraudulent transactions make up less than 1% of total data, yet missing them can cause huge losses. A model that labels everything as legitimate achieves high accuracy but provides zero value.
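The arithmetic behind this accuracy trap is easy to check. The sketch below uses made-up numbers (1,000 transactions, 10 of them fraudulent) and a "model" that labels everything as legitimate.

```python
def accuracy_and_recall(y_true, y_pred, positive=1):
    """Accuracy over all examples and recall on the positive (rare) class."""
    pairs = list(zip(y_true, y_pred))
    correct = sum(t == p for t, p in pairs)
    true_pos = sum(t == positive and p == positive for t, p in pairs)
    actual_pos = sum(t == positive for t in y_true)
    accuracy = correct / len(y_true)
    recall = true_pos / actual_pos if actual_pos else 0.0
    return accuracy, recall

# Illustrative data: 10 fraudulent (1) and 990 legitimate (0) transactions
y_true = [1] * 10 + [0] * 990
y_pred = [0] * 1000  # predicts "legitimate" every time

accuracy, recall = accuracy_and_recall(y_true, y_pred)
print(f"accuracy={accuracy:.2f}, fraud recall={recall:.2f}")
```

Accuracy comes out at 99% while recall on fraud is zero, which is why recall, precision, or a cost-based metric is a better yardstick for imbalanced problems.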

Medical diagnosis faces similar issues. Rare diseases appear infrequently in training data. Cancer detection models might classify every scan as healthy simply because cancer cases represent a small fraction of examples. Patients suffer when algorithms overlook critical conditions.

Techniques like oversampling minority classes, undersampling majority classes, and synthetic minority oversampling help restore balance. Cost-sensitive learning assigns higher penalties for misclassifying rare cases. Anomaly detection approaches treat minority classes as outliers requiring special attention.
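Two of these ideas can be sketched in a few lines: random oversampling of minority classes, and inverse-frequency class weights for cost-sensitive learning. The function names are hypothetical. The weighting formula, n_samples / (n_classes * class_count), is one common heuristic rather than the only option.

```python
import random
from collections import Counter

def random_oversample(rows, labels, seed=0):
    """Duplicate minority-class rows at random until every class matches the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_rows, out_labels = list(rows), list(labels)
    for cls, n in counts.items():
        pool = [r for r, l in zip(rows, labels) if l == cls]
        for _ in range(target - n):
            out_rows.append(rng.choice(pool))
            out_labels.append(cls)
    return out_rows, out_labels

def balanced_class_weights(labels):
    """Inverse-frequency weights so rare classes incur a larger penalty."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}
```

Oversampling should be applied to the training split only. Duplicating rows before splitting leaks copies of the same example into the evaluation set.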

What Causes Overfitting and Underfitting in Models?

Overfitting occurs when your model memorizes training data instead of learning general patterns. The algorithm performs brilliantly on familiar examples but fails spectacularly on new data. Underfitting represents the opposite problem. Your model remains too simple to capture important relationships.

About 40% of production models underperform due to poor generalization caused by overfitting or underfitting. Organizations waste resources building systems that cannot handle real-world scenarios. Stock prediction models demonstrate this clearly. They might replay historical patterns perfectly yet crash when market conditions shift.

Regularization techniques combat overfitting by penalizing overly complex models. Cross-validation estimates performance on unseen data by repeatedly holding out part of the training set for evaluation. Dropout randomly disables neurons during neural network training, forcing the model to learn robust features rather than memorizing examples.
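As a rough illustration of how cross-validation holds data out, the sketch below generates k-fold index splits in plain Python. The helper name `k_fold_indices` is hypothetical, and the sketch assumes the data has already been shuffled (or should stay in order, as with time series).

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs; each sample is held out exactly once."""
    # Spread any remainder over the first folds so sizes differ by at most one
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, val_idx
        start += size

for train_idx, val_idx in k_fold_indices(10, 3):
    print(len(train_idx), "train /", len(val_idx), "validation")
```

You would train on `train_idx` and score on `val_idx` for each fold, then average the scores. A large gap between training and validation scores is a typical sign of overfitting.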

Finding the sweet spot requires experimentation. You adjust model complexity, add or remove features, and tune hyperparameters. Ensemble methods combine multiple models to balance individual weaknesses. The goal remains consistent: build systems that generalize well beyond training data.

How Do You Address Model Interpretability Issues?

Your neural network makes decisions, but you cannot explain why. Stakeholders demand transparency. Regulators require justifications. Customers deserve explanations. Yet deep learning models operate as black boxes, transforming inputs into outputs through millions of parameters.

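Regulatory frameworks increasingly demand explainability. GDPR restricts solely automated decisions that significantly affect individuals and entitles them to meaningful information about the logic involved, and the EU AI Act adds transparency obligations for high-risk systems. Sectors such as banking and healthcare feel this most. A credit scoring system that denies loans without explanation invites lawsuits and regulatory penalties. Healthcare providers must justify treatment recommendations to patients and insurance companies.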

Explainable AI techniques help solve this problem. SHAP values quantify each feature’s contribution to predictions. LIME approximates complex models with simpler, interpretable ones locally. Attention mechanisms in neural networks highlight which inputs influenced specific decisions.
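SHAP builds on Shapley values from cooperative game theory. The sketch below computes exact Shapley values for a tiny, hypothetical scoring function by enumerating every feature coalition. It is not the SHAP library's API, and because the cost grows as 2 to the power of the feature count, it is only practical for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Attribute predict(x) - predict(baseline) to each feature.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Probability that a random ordering places exactly `size` features before i
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

# Hypothetical scoring function with an income-years interaction
def score(features):
    income, debt, years = features
    return 2.0 * income - 3.0 * debt + 0.5 * income * years

phi = shapley_values(score, x=[4.0, 1.0, 2.0], baseline=[0.0, 0.0, 0.0])
print(dict(zip(["income", "debt", "years"], phi)))
```

The attributions always sum to the difference between the prediction and the baseline prediction, and the interaction term is split evenly between the two features involved.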

Trade-offs exist between accuracy and interpretability. Simple models like decision trees offer clear logic but limited power. Complex deep learning systems achieve superior performance at the cost of transparency. You must choose based on your specific requirements and constraints.

What Deployment Challenges Do Organizations Face?

Training a model represents only half the battle. Deploying it into production systems introduces entirely new challenges. APIs must handle concurrent requests. Databases need optimization. Real-time systems demand low latency. Integration with existing infrastructure often proves painful.

Version control becomes critical. You must track which model version serves which users. Rollback capabilities protect against failures. Monitoring systems detect performance degradation. A/B testing compares new models against existing ones safely.
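One simple way to track which model version serves which users during an A/B test or gradual rollout is deterministic hash-based routing. The sketch below is an assumption-laden illustration: the version labels `model-v1` and `model-v2` and the 10% default share are placeholders.

```python
import hashlib

def assign_model_version(user_id, candidate_share=0.1,
                         stable="model-v1", candidate="model-v2"):
    """Route a fixed share of users to the candidate model, consistently per user."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a value in [0, 1)
    bucket = int(digest[:8], 16) / 0x100000000
    return candidate if bucket < candidate_share else stable

print(assign_model_version("user-42"))
```

Because the assignment depends only on the user ID, a user sees the same version on every request, and setting `candidate_share` to 0 acts as an immediate rollback.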

Scalability concerns emerge under production loads. Your model might work perfectly on small test sets but crash when processing millions of daily requests. Cloud infrastructure, containerization, and load balancing address scaling issues. Batch processing handles non-urgent predictions efficiently.

Security vulnerabilities require attention. Models need protection against adversarial attacks. Data pipelines must maintain privacy. Access controls prevent unauthorized usage. Each deployment component demands security considerations.

Why Does Model Drift Degrade Performance Over Time?

Your model performs excellently at launch. Months later, accuracy plummets. The world changed, but your model did not. In a survey by Evidently AI, more than 60% of ML teams reported model performance degradation within six months of deployment.

Customer behavior evolves. Market conditions shift. New products appear. Seasonal patterns emerge. The data distribution that informed training no longer matches reality. Models cannot adapt without intervention.

Continuous monitoring detects drift before it causes serious damage. You track prediction distributions, feature statistics, and accuracy metrics. Automated retraining schedules keep models current. A/B testing compares new versions against deployed ones.
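Tracking feature statistics can be as simple as comparing a recent sample against the training-time sample. The sketch below computes the Population Stability Index (PSI), one commonly used drift score, for a single numeric feature. The bin count and the floor for empty bins are assumptions you would tune.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

reference = [i / 100 for i in range(1000)]   # stand-in for training-time values
shifted = [v + 5 for v in reference]         # stand-in for drifted production values
print(population_stability_index(reference, shifted))
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions, not guarantees, and should be validated for your domain.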

Some models require frequent updates. Recommendation systems must adapt to trending content. Fraud detection algorithms must recognize new attack patterns. Other models remain stable for extended periods. You must determine appropriate update frequencies based on your domain.

How Do Privacy Concerns Affect Machine Learning Projects?

Your models need data to learn. That data often contains sensitive personal information. Regulatory frameworks like GDPR and HIPAA impose strict requirements. Cybersecurity and data privacy are currently US executives’ top concerns when it comes to implementing generative AI, at 81% and 78% respectively.

Healthcare organizations handle patient records. Financial institutions process transaction histories. Retailers track shopping behaviors. Each data point reveals information about individuals. Breaches expose millions to identity theft and fraud.

Privacy-preserving techniques offer solutions. Differential privacy adds calibrated noise to query results or training updates, protecting individual records while preserving aggregate statistical properties. Federated learning trains models across distributed data without centralizing sensitive information. Homomorphic encryption enables computations on encrypted data.
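To show what adding noise looks like in practice, here is a minimal sketch of the Laplace mechanism applied to a counting query. It assumes each person contributes at most one record (sensitivity 1). The function names are hypothetical, and naive floating-point sampling like this has known weaknesses, so production systems should rely on a vetted differential privacy library.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng=None):
    """Release a count with epsilon-differential privacy, assuming sensitivity 1."""
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

ages = [23, 35, 41, 52, 67, 29, 38, 71]  # illustrative data
print(private_count(ages, lambda a: a >= 40, epsilon=0.5))
```

Smaller values of `epsilon` mean more noise and stronger privacy. Each released answer spends part of a privacy budget, so repeated queries on the same data need to be accounted for.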

IBM’s 2022 Cost of a Data Breach Report estimated the average breach cost at $4.35 million, a risk that extends to ML workflows that store or share personal data. Prevention costs less than remediation. Implementing proper security measures from the start protects both organizations and users.

What Role Does Bias Play in Machine Learning Failures?

Your training data reflects historical patterns. Those patterns often encode societal biases. Models learn and amplify these biases, producing discriminatory outcomes. MIT research (the Gender Shades study) found that commercial facial-analysis systems misclassified the gender of darker-skinned women up to 34% of the time, versus less than 1% for lighter-skinned men.

Hiring algorithms trained on past decisions might discriminate against women or minorities. Loan approval systems could deny qualified applicants based on zip codes correlated with race. Criminal justice risk assessments might unfairly target certain demographic groups. Each instance causes real harm.

Bias detection requires careful analysis. You examine training data for imbalances. Feature importance reveals which attributes drive decisions. Fairness metrics quantify disparate impacts across groups. Regular audits catch problems before they affect users.
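Two simple fairness metrics can be computed directly from predictions and group labels. The sketch below assumes binary predictions (1 = favorable outcome) and a single sensitive attribute. The function names are hypothetical.

```python
def selection_rates(predictions, groups):
    """Share of favorable (1) predictions per group."""
    totals, positives = {}, {}
    for pred, grp in zip(predictions, groups):
        totals[grp] = totals.get(grp, 0) + 1
        positives[grp] = positives.get(grp, 0) + pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(predictions, groups).values()
    return max(rates) - min(rates)

def disparate_impact_ratio(predictions, groups):
    """Lowest selection rate divided by the highest (1 = parity)."""
    rates = selection_rates(predictions, groups).values()
    return min(rates) / max(rates) if max(rates) else 1.0

preds = [1, 1, 1, 0, 1, 0, 0, 0]          # illustrative loan approvals
groups = ["a"] * 4 + ["b"] * 4
print(selection_rates(preds, groups))
print(disparate_impact_ratio(preds, groups))
```

A ratio below 0.8 is often flagged under the "four-fifths" rule of thumb, though which metric and threshold are appropriate depends on the application and the applicable law.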

Mitigation strategies include rebalancing training data, removing problematic features, and applying fairness constraints during training. Post-processing adjustments correct biased predictions. Diverse development teams catch issues that homogeneous groups might miss. Continuous monitoring ensures fairness persists over time.

How Does Lack of Domain Expertise Hinder Projects?

Data scientists understand algorithms. Domain experts understand business problems. Successful projects require both. A McKinsey report showed ML projects involving domain experts had 30–40% higher success rates.

A financial model built without economic insight might overlook market cycles. Healthcare algorithms developed without medical knowledge could misinterpret symptoms. Manufacturing systems designed without industrial expertise might optimize the wrong metrics. Technical brilliance alone proves insufficient.

Cross-functional teams bridge this gap. Data scientists collaborate with business analysts, subject matter experts, and end users. Regular communication ensures technical solutions address actual needs. Domain experts validate model outputs and identify edge cases.

Knowledge transfer works both ways. Data scientists learn business context. Domain experts gain technical understanding. This shared knowledge improves feature engineering, model selection, and result interpretation. Organizations that invest in building these bridges see better outcomes.

What Skill Gaps Prevent Machine Learning Adoption?

Some 72% of IT leaders cite AI skills as a crucial gap that needs urgent attention. Demand for machine learning expertise far exceeds supply. Organizations struggle to find qualified professionals. Existing talent commands premium salaries.

Technology evolves rapidly. Surveys show that over 50% of ML professionals feel their skills become outdated within 12–18 months. New frameworks appear constantly. Research papers introduce novel techniques weekly. Keeping current requires continuous learning.

Several strategies address skill shortages. Internal training programs upskill existing employees. Partnerships with universities create talent pipelines. Consultants provide temporary expertise. Low-code and no-code platforms democratize access to machine learning capabilities.

Open-source tools and pre-trained models lower entry barriers. Transfer learning allows teams to adapt existing models rather than building from scratch. Cloud platforms provide managed services that handle infrastructure complexity. These resources make machine learning accessible to organizations without extensive expertise.

What Computational Resources Do Models Require?

Training sophisticated models demands significant computational power. Deep learning networks require expensive GPUs or specialized hardware. Cloud infrastructure costs accumulate quickly. Small organizations find these expenses prohibitive.

A single training run might consume weeks of compute time. Hyperparameter tuning multiplies this cost by exploring multiple configurations. Large language models require millions of dollars in computing resources. Research organizations and startups struggle to compete with tech giants.

Solutions exist for resource-constrained scenarios. Transfer learning leverages pre-trained models, reducing training requirements. Model compression techniques like pruning and quantization decrease computational needs. Efficient architectures achieve comparable performance with fewer parameters.
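Quantization is the easiest of these techniques to show in miniature. The sketch below applies symmetric per-tensor 8-bit quantization to a list of weights. Real frameworks offer more refined schemes (per-channel scales, calibration), so treat this as an illustration of the idea only.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]

weights = [0.5, -1.27, 0.0, 0.003]  # illustrative values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, restored)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per weight. Very small weights, like the last one here, round to zero.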

Cloud providers offer pay-as-you-go pricing. Spot instances reduce costs for interruptible workloads. Open-source frameworks optimize performance. Hardware advances make specialized processors more affordable. Organizations must balance performance requirements against budget constraints.

How Do Security Vulnerabilities Threaten Machine Learning Systems?

Adversaries can manipulate your models through carefully crafted inputs. Autonomous vehicles might misclassify stop signs with strategically placed stickers. Spam filters fail when attackers add specific phrases. These adversarial examples expose fundamental vulnerabilities.

Model stealing attacks extract proprietary algorithms through repeated queries. Poisoning attacks corrupt training data to introduce backdoors. Privacy attacks reconstruct training examples from model parameters. Each vulnerability requires specific defenses.

Adversarial training exposes models to attack examples during training, improving robustness. Input validation detects suspicious patterns before processing. Defensive distillation makes models less sensitive to small perturbations. Regular security audits identify potential weaknesses.
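Adversarial training needs a way to generate attack examples. One widely used method is the fast gradient sign method (FGSM), sketched here for a tiny logistic regression model with made-up weights. The model, inputs, and step size are illustrative assumptions.

```python
import math

def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def fgsm_perturb(weights, bias, x, y, eps):
    """Move each input feature by eps in the direction that increases the loss."""
    p = predict_proba(weights, bias, x)
    # For binary cross-entropy, d(loss)/d(x_i) = (p - y) * w_i
    grad = [(p - y) * w for w in weights]
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

weights, bias = [2.0, -1.0], 0.0          # hypothetical trained model
x, y = [0.3, 0.1], 1                      # correctly classified example
x_adv = fgsm_perturb(weights, bias, x, y, eps=0.3)
print(predict_proba(weights, bias, x), predict_proba(weights, bias, x_adv))
```

The perturbed input differs from the original by at most `eps` per feature, yet the predicted class flips. Adversarial training adds examples like `x_adv`, with their correct labels, back into the training set.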

Data encryption protects information at rest and in transit. Access controls limit who can interact with models. Monitoring systems detect unusual query patterns. Defense-in-depth strategies apply multiple layers of protection. Security must be considered throughout the development lifecycle.

What Solutions Address These Challenges?

Organizations that succeed in machine learning adopt comprehensive strategies. They invest in data infrastructure to ensure quality. They establish governance frameworks to manage privacy and compliance. They build diverse teams combining technical and domain expertise.

Continuous monitoring catches problems early. Automated retraining keeps models current. Rigorous testing validates performance before deployment. Documentation enables maintenance and troubleshooting. Version control tracks changes over time.

Education programs upskill existing employees. Partnerships with academic institutions create talent pipelines. Collaboration with specialized consultants accelerates projects. Cloud platforms reduce infrastructure complexity. Open-source tools lower costs and increase transparency.

Ethical guidelines ensure fairness and accountability. Bias detection tools identify problematic patterns. Explainability techniques build stakeholder trust. Privacy-preserving methods protect sensitive data. Security measures defend against attacks.

Success requires balancing competing priorities. Accuracy must be weighed against interpretability. Privacy needs must be reconciled with data requirements. Speed demands conflict with thoroughness. Organizations that navigate these trade-offs effectively unlock machine learning’s transformative potential.

What Future Trends Will Shape Machine Learning?

The machine learning landscape continues to evolve rapidly. Organizations must prepare for emerging trends that will redefine implementation approaches. The global machine learning market will reach $503.40 billion by 2030, driven by technological breakthroughs and expanding applications.

Agentic AI systems represent the next frontier. These systems complete entire tasks autonomously rather than simply responding to queries. Companies like Google and Salesforce test agents that schedule meetings, analyze reports, and trigger actions based on data. Unlike traditional automation, these agents make real-time decisions using ML models instead of fixed rules.

Small language models gain traction for specialized applications. Organizations realize that massive models often prove unnecessary for specific tasks. Smaller models cost less to run, deploy faster, and are easier to maintain. For narrow, well-defined tasks, specialized models can match or outperform generic ones.

Federated learning addresses privacy concerns while enabling collaboration. Organizations train models across distributed data without centralizing sensitive information. Healthcare providers can improve diagnostics without sharing patient records. Financial institutions detect fraud while keeping transaction data secure. Privacy and performance need not be opposing goals.
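The aggregation step at the heart of federated learning can be sketched briefly. In the federated averaging approach, each participant trains locally and sends only model weights, which a coordinator averages in proportion to each participant's sample count. The client data below is made up, and real deployments add secure aggregation and many training rounds.

```python
def federated_average(client_updates):
    """Average model weights across clients, weighted by local sample count.

    client_updates: list of (weights, n_samples) tuples; raw data never leaves the client.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]

# Illustrative updates from two hospitals with different dataset sizes
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_weights = federated_average(updates)
print(global_weights)
```

The larger client pulls the average toward its weights, which reflects its greater share of the combined data.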

Explainable AI becomes mandatory rather than optional. Regulators demand transparency. Stakeholders require justifications. Customers deserve explanations. Techniques that reveal model reasoning gain adoption across industries. Organizations that prioritize interpretability build trust and ensure compliance.

Edge computing brings machine learning closer to data sources. Processing happens on devices rather than distant servers. Response times drop dramatically. Privacy improves through localized computation. Applications range from autonomous vehicles to smart manufacturing systems. The shift toward edge deployment accelerates as hardware capabilities improve.

Quantum machine learning is beginning to move from research labs toward practical applications. Quantum computing promises to solve certain problems beyond classical algorithms’ reach. Early adopters in finance and pharmaceuticals explore quantum approaches for optimization and drug discovery. The technology remains experimental but shows potential.

Multimodal learning systems process diverse input types simultaneously. Models understand text, images, audio, and video together rather than separately. Applications span education, transportation, and content generation. The ability to connect different data types creates more intelligent and context-aware systems.

How Do You Implement Machine Learning Successfully?

Success requires strategic planning and disciplined execution. Organizations must follow proven best practices to maximize returns on machine learning investments. Research shows that ML projects involving domain experts had 30–40% higher success rates. Clear methodology separates successful implementations from failed experiments.

Start with business objectives rather than technical capabilities. Define what success looks like before building anything. Identify specific problems that machine learning can solve. Establish measurable metrics that align with business goals. Customer churn reduction, fraud detection accuracy, or operational efficiency gains provide concrete targets.

Ensure data readiness before model development. Data quality determines model performance. Invest in data infrastructure first. Establish governance frameworks. Implement validation processes. Clean data saves time during model training. Organizations that prioritize data preparation avoid costly rework later.

Build cross-functional teams that combine diverse expertise. Data scientists understand algorithms. Domain experts understand business problems. Software engineers handle infrastructure. DevOps specialists manage deployment. Collaboration between these roles produces solutions that address real needs.

Begin with simple models and iterate toward complexity. Early wins build momentum. Quick prototypes reveal problems before significant investments. Start small, validate assumptions, then scale. Incremental progress beats attempting perfect solutions immediately.

Implement robust testing at multiple levels. Unit tests verify individual components. Integration tests validate connections between parts. End-to-end tests confirm complete workflows. Automated testing maintains quality as systems evolve. Tests catch problems before they reach production.

Establish continuous monitoring from day one. Track performance metrics. Detect drift early. Monitor prediction distributions. Log unusual patterns. Automated alerts notify teams of degradation. Regular reviews ensure models remain effective over time.
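A monitoring hook does not need to be elaborate to be useful. The sketch below keeps a rolling window of prediction outcomes and flags when accuracy falls below a threshold. The class name, window size, and threshold are illustrative assumptions, and it presumes ground-truth labels eventually arrive for each prediction.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Track accuracy over the most recent predictions and flag degradation."""

    def __init__(self, window=500, threshold=0.9, min_samples=50):
        self.outcomes = deque(maxlen=window)  # oldest outcomes drop off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def needs_attention(self):
        # Avoid alerting on too few observations
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.threshold
```

In use, you would call `record` whenever a true label becomes available and wire `needs_attention` to an alert or a retraining job.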

Document everything thoroughly. Future maintainers need context. Decisions should be traceable. Experiments require recording. Documentation enables knowledge transfer and troubleshooting. Teams that document well maintain systems more easily.

Choose technology stacks that support long-term goals. Cloud platforms offer scalability and flexibility. Containerization enables consistent deployment. Version control tracks changes. MLOps tools automate workflows. Technology should enable iteration rather than constrain it.

Plan for model retraining from the beginning. Data distributions change. Models require updates. Establish retraining schedules. Build automated pipelines. Fresh models maintain accuracy as conditions evolve. Proactive updates prevent gradual degradation.

Measure business impact rather than just technical metrics. Accuracy matters, but business results matter more. Track customer satisfaction, revenue impact, cost savings, or efficiency gains. Real-world outcomes justify investments and guide priorities.

Key Takeaways

Machine learning offers tremendous value but presents significant challenges. Data quality issues consume most project time. Imbalanced datasets cause models to ignore critical cases. Overfitting and underfitting prevent generalization. Deployment complexities hinder production success.

Model drift degrades performance over time. Privacy concerns require careful handling. Bias produces discriminatory outcomes. Domain expertise proves essential for success. Skill gaps limit adoption. Computational costs strain budgets. Security vulnerabilities expose systems to attacks.

Solutions exist for each challenge. Robust data governance ensures quality. Advanced techniques address imbalanced datasets. Regularization and cross-validation improve generalization. MLOps practices streamline deployment. Continuous monitoring detects drift. Privacy-preserving methods protect data. Bias detection and mitigation promote fairness. Cross-functional teams bridge expertise gaps. Cloud platforms reduce resource requirements. Security measures defend against threats.

Future trends reshape the landscape. Agentic AI completes tasks autonomously. Small specialized models can match or beat generic ones on narrow tasks. Federated learning balances privacy with collaboration. Explainable AI builds trust and ensures compliance. Edge computing improves speed and privacy. Quantum computing may eventually tackle problems that are intractable today. Multimodal systems understand diverse data types together.

Successful implementation follows proven practices. Start with clear business objectives. Prioritize data quality. Build cross-functional teams. Begin simple and iterate. Test thoroughly. Monitor continuously. Document comprehensively. Choose flexible technology. Plan for retraining. Measure business impact.

Organizations that understand these challenges and implement appropriate solutions position themselves for success. Machine learning transforms industries, but only for those who navigate its complexities effectively. Your preparation today determines your competitive advantage tomorrow. The market grows rapidly, but success belongs to those who address challenges systematically while embracing emerging opportunities.

Frequently Asked Questions

- Q: What is the biggest challenge in machine learning?

- The Reality: Data quality. Roughly 70–80% of ML project time is spent collecting, cleaning, and labeling data, not building models.

- Q: Why do machine learning models fail in production?

- The Reality: Roughly 85% of trained models never reach production. Success requires a focus on deployment infrastructure (MLOps), not just accuracy.

- Q: How do you prevent model drift?

- The Reality: More than 60% of ML teams report performance degradation within six months of deployment. The Fix: Implement automated monitoring and retrain models when performance dips.

- Q: How much does implementation actually cost?

- The Reality: Costs go beyond development. IBM’s 2022 Cost of a Data Breach Report put the average breach at $4.35M, so security and privacy aren’t optional; they are a cost-saving measure.