6 Powerful Data Stream Model Types You Must Know Today

In today’s digitally driven ecosystem, data no longer waits patiently in databases to be analyzed later. Instead, it flows continuously from countless sources (applications, sensors, users, and machines) and demands immediate attention. This constant flow has fundamentally reshaped how organizations process information. At the center of this transformation lies the Data Stream Model, a foundational concept that enables real-time insights, responsiveness, and scalability.

As businesses move toward instant decision-making, understanding how streaming data works and how it can be modeled efficiently has become a critical competency. This article explores the Data Stream Model in depth, covering its theoretical foundations, processing algorithms, architectural patterns, and real-world applications.

The Shift to Real-Time: Why Streaming Data Matters

Modern systems operate in environments where milliseconds can determine success or failure. Financial platforms detect fraud as transactions occur, e-commerce platforms personalize recommendations instantly, and monitoring systems trigger alerts before outages escalate. These capabilities rely on real-time processing rather than delayed batch analysis.

The rise of streaming data reflects a broader shift from reactive analytics to proactive intelligence. The Data Stream Model provides the conceptual framework required to analyze information as it arrives, making it possible to respond to events the moment they happen.

Defining Streaming Data: Continuous Flow, Unbounded Nature

Streaming data is characterized by its continuous, unbounded, and time-ordered nature. Unlike traditional datasets, streaming data does not have a defined beginning or end. Events keep arriving indefinitely, often at high velocity and in varying formats.

This unbounded flow introduces complexity. Systems cannot simply store everything and analyze it later. Instead, they must process data incrementally, often in a single pass. The Data Stream Model addresses these constraints by emphasizing efficiency, approximation, and temporal awareness.

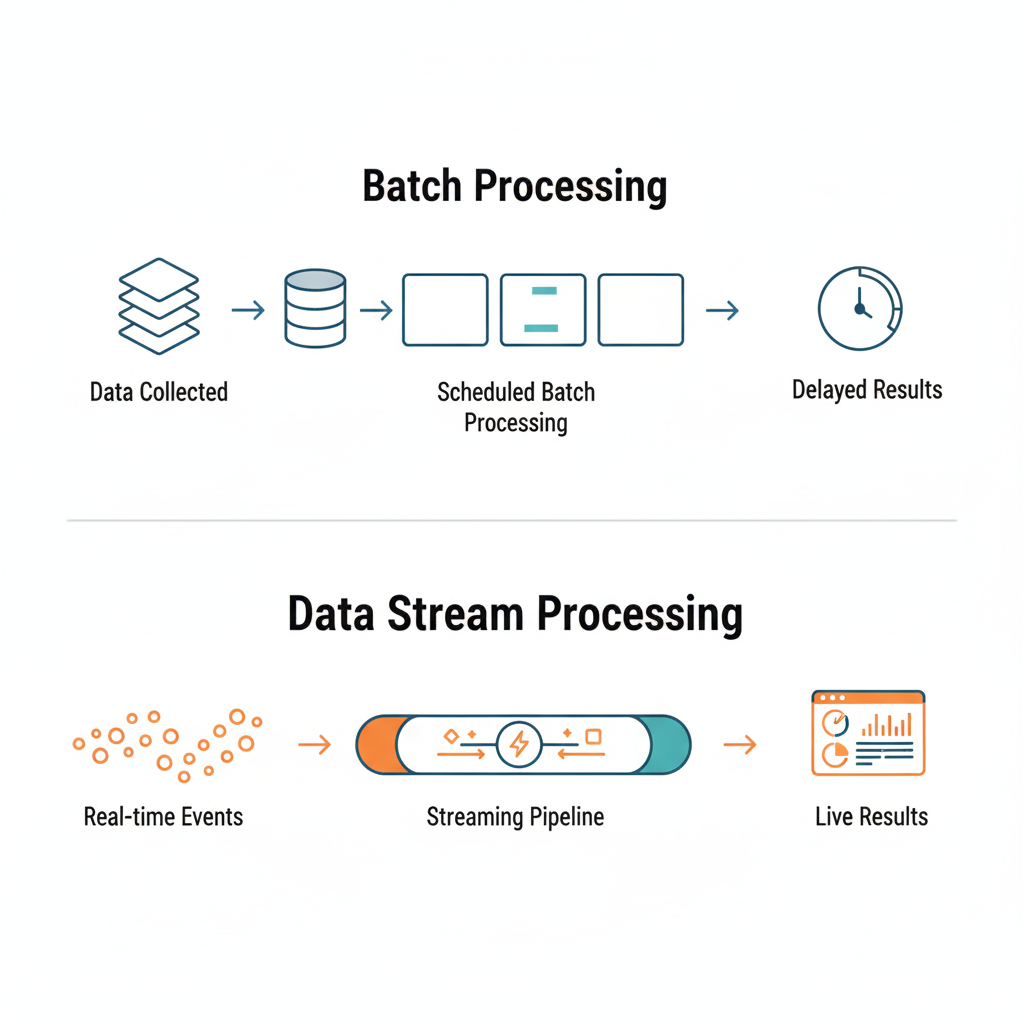

Distinguishing from Batch Processing: Data-in-Motion vs. Data-at-Rest

Batch processing operates on static datasets: data at rest, typically stored in databases or data warehouses. While effective for historical analysis, batch systems struggle with latency and responsiveness.

In contrast, streaming systems deal with data in motion. The Data Stream Model is purpose-built for this paradigm, prioritizing low latency, continuous computation, and adaptability. Rather than replacing batch processing, streaming complements it, enabling organizations to balance immediacy with depth.

Our Focus: Beyond the Basics to the Foundational Data Stream Model

This article looks deeper than basic explanations. It explores the key principles that shape streaming systems. Understanding the Data Stream Model helps you design, evaluate, and optimize real-time data solutions across different fields.

Understanding the Data Stream Model: Theoretical Foundations

What is the Data Stream Model?

The Data Stream Model is a way to process large, ongoing data flows. It uses little memory and requires few passes over the data. It assumes that data arrives sequentially and must be processed on the fly, often without the ability to revisit past events.

This model works well for high-volume streams where storing all the data is impractical or impossible. To deliver timely insights, it often relies on probabilistic and approximate methods instead of exact calculations.

Core Characteristics of Data Streams

Several defining characteristics shape how the Data Stream Model operates:

- Unbounded input: Data arrives continuously without a known endpoint

- High velocity: Events may occur at extremely high rates

- Single-pass processing: Data is typically processed once

- Limited memory: Systems cannot store the entire stream

- Time sensitivity: The value of data often decreases over time

These constraints require algorithms and architectures specifically designed for streaming environments.
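To make the single-pass, limited-memory constraints concrete, here is a minimal sketch that keeps a running mean and variance using Welford's online update. The class name `RunningStats` and the sample values are illustrative assumptions, not part of any particular streaming framework.

```python
class RunningStats:
    """Running mean and population variance in O(1) memory (Welford's method)."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def update(self, x):
        # Each value is seen exactly once and then discarded.
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self):
        return self._m2 / self.n if self.n else 0.0


stats = RunningStats()
for value in [2, 4, 4, 4, 5, 5, 7, 9]:  # stand-in for an unbounded stream
    stats.update(value)
```

The state is three numbers regardless of how many events arrive, which is the property streaming algorithms aim for.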

Fundamental Models for Stream Processing

Within the broader Data Stream Model, several sub-models define how updates and events are interpreted. Each has implications for processing logic and system design.

The Turnstile Model: Analyzing Net Change Over Time

The turnstile model allows both positive and negative updates to data elements. For example, inventory levels may increase or decrease as items are stocked or sold. This model is useful when tracking net changes rather than absolute totals.

However, handling negative updates adds complexity, particularly when using approximate algorithms. Systems must carefully manage state to ensure accuracy within acceptable bounds.
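As a rough illustration of turnstile updates, the sketch below applies signed `(item, delta)` pairs to a dictionary of net counts. The function name `apply_updates` and the inventory data are hypothetical. The `strict` flag models the common "strict turnstile" assumption that no count ever drops below zero.

```python
from collections import defaultdict


def apply_updates(updates, strict=True):
    """Apply signed (item, delta) updates and return the net count per item."""
    counts = defaultdict(int)
    for item, delta in updates:
        counts[item] += delta
        if strict and counts[item] < 0:
            # Strict turnstile: a net count may never become negative.
            raise ValueError(f"net count for {item!r} went negative")
    return dict(counts)


inventory = apply_updates(
    [("widget", 10), ("gadget", 4), ("widget", -3), ("gadget", -4)]
)
```

An exact dictionary like this only works when the number of distinct items is small. With many items, a sketch data structure usually replaces it.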

The Cash Register Model: Aggregating Total Counts and Values

In the cash register model, updates are strictly additive. Values only increase over time, such as counting page views or total sales. This simplicity helps in designing efficient algorithms. It’s commonly used in analytics and monitoring.

The Sliding Window Model: Processing Data in Dynamic Segments

The sliding window model focuses on recent data rather than the entire stream. By defining windows based on time or event counts, systems can analyze trends and detect anomalies using only current data, so results are not skewed by stale events.

Fixed (Tumbling) Windows

Tumbling windows divide the stream into non-overlapping segments. Each event belongs to exactly one window, making aggregation straightforward and predictable.
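A minimal sketch of tumbling-window aggregation, assuming events arrive as `(timestamp, value)` pairs with numeric timestamps. The function name and the sample events are illustrative.

```python
from collections import defaultdict


def tumbling_counts(events, window_size):
    """Count events per non-overlapping window, keyed by window start time."""
    counts = defaultdict(int)
    for timestamp, _value in events:
        # Integer division assigns each event to exactly one window.
        window_start = (timestamp // window_size) * window_size
        counts[window_start] += 1
    return dict(counts)


events = [(1, "a"), (4, "b"), (10, "c"), (12, "d"), (25, "e")]
per_window = tumbling_counts(events, window_size=10)
```

With a window size of 10, the events at timestamps 1 and 4 fall in the window starting at 0, those at 10 and 12 in the window starting at 10, and the event at 25 in the window starting at 20.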

Hopping (Sliding) Windows

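Hopping windows have a fixed size and advance by a fixed interval (the hop) that is smaller than the window size. Consecutive windows therefore overlap, and a single event can contribute to several of them. This approach provides smoother trend analysis but requires more computation.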
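To show how one event can land in several overlapping windows, this sketch computes every window start that covers a given timestamp. It assumes non-negative integer timestamps, windows that start at multiples of `hop`, and a `size` that is a multiple of `hop`. The function name is hypothetical.

```python
def hopping_windows(timestamp, size, hop):
    """Return the start of every window [start, start + size) containing timestamp."""
    last_start = (timestamp // hop) * hop   # latest window that has already opened
    first_start = last_start - size + hop   # earliest window that is still open
    # Windows with a negative start are dropped under the assumptions above.
    return [s for s in range(first_start, last_start + 1, hop) if s >= 0]


starts = hopping_windows(12, size=10, hop=5)
```

Here an event at timestamp 12 belongs to the windows starting at 5 and 10, so each event is processed `size / hop` times once the stream is warmed up. That ratio is the extra computation overlapping windows cost.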

Session Windows

Session windows group events based on periods of activity separated by inactivity gaps. They are particularly useful for user behavior analysis and interaction tracking.
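A minimal sketch of session grouping, assuming timestamps arrive in order for a single user and that a gap longer than `gap` closes the session. The function name and the sample values are illustrative.

```python
def sessionize(timestamps, gap):
    """Group ordered timestamps into sessions separated by more than `gap`."""
    sessions = []
    for t in timestamps:
        if sessions and t - sessions[-1][-1] <= gap:
            sessions[-1].append(t)   # still within the current session
        else:
            sessions.append([t])     # inactivity gap exceeded: start a new session
    return sessions


sessions = sessionize([1, 2, 3, 20, 22, 60], gap=10)
```

Unlike tumbling or hopping windows, session boundaries depend on the data itself, so window length varies from session to session.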

The Implications of Different Models for Processing Logic

Each stream model influences how data is stored, aggregated, and interpreted. Selecting the appropriate model depends on business requirements, latency tolerance, and computational constraints. Understanding these implications is essential for designing effective streaming systems.

Core Streaming Algorithms: Extracting Insights with Limited Resources

The Challenge of Single-Pass Processing and Memory Constraints

Streaming algorithms must operate under strict limitations. Traditional exact methods can be impractical with only one data pass and limited memory. The Data Stream Model embraces approximation as a practical solution.

Estimating Frequency and Heavy Hitters

Identifying frequently occurring elements, often called heavy hitters, is a common streaming task. Approximate algorithms provide efficient solutions without storing every event.

Count-Min Sketch: Approximating Frequencies in Data Streams

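Count-Min Sketch uses several hash functions and a small two-dimensional array of counters to estimate frequencies. When updates are only additive, it can overestimate a count because of hash collisions but never underestimates it, and the size of the error is bounded with high probability by the chosen width and depth. Because its memory use is fixed regardless of stream length, it suits high-volume streams.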
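The following is a small sketch of the idea, not a production implementation. The class name, the default `width` and `depth`, and the use of salted BLAKE2b from Python's `hashlib` as the hash family are all assumptions made for illustration.

```python
import hashlib


class CountMinSketch:
    """Fixed-size frequency estimator for additive (cash register) updates."""

    def __init__(self, width=1000, depth=5):
        self.width = width
        self.depth = depth
        self.table = [[0] * width for _ in range(depth)]

    def _index(self, item, row):
        # One salted hash per row stands in for independent hash functions.
        digest = hashlib.blake2b(
            str(item).encode("utf-8"),
            digest_size=8,
            salt=row.to_bytes(8, "little"),
        ).digest()
        return int.from_bytes(digest, "little") % self.width

    def add(self, item, count=1):
        for row in range(self.depth):
            self.table[row][self._index(item, row)] += count

    def estimate(self, item):
        # Collisions can only inflate counters, so the minimum is the best guess.
        return min(
            self.table[row][self._index(item, row)] for row in range(self.depth)
        )


sketch = CountMinSketch()
for page in ["/home"] * 5 + ["/cart"] * 2:
    sketch.add(page)
```

A wider table reduces the size of the overestimate, and more rows reduce the probability of a large one. Both are tuning choices.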

Misra–Gries Algorithm: Identifying Frequent Elements

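The Misra–Gries algorithm keeps a small, fixed number of candidate elements along with approximate counts. With k - 1 counters, it is guaranteed to retain every element that occurs more than n/k times in a stream of n items, which makes it effective for finding elements that exceed a defined frequency threshold.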
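A compact sketch of the algorithm in its standard textbook form. The function name and the sample stream are illustrative.

```python
def misra_gries(stream, k):
    """Return candidate heavy hitters using at most k - 1 counters."""
    counters = {}
    for item in stream:
        if item in counters:
            counters[item] += 1
        elif len(counters) < k - 1:
            counters[item] = 1
        else:
            # No free counter: decrement all counters and drop those at zero.
            for key in list(counters):
                counters[key] -= 1
                if counters[key] == 0:
                    del counters[key]
    return counters


candidates = misra_gries("aabacadaaeaf", k=3)
```

Any item that appears more than n/k times is guaranteed to be among the returned candidates, but the stored counts may undercount by up to n/k, and some candidates may not be frequent at all. When exact results matter, a second pass over the data (if one is available) can verify the candidates.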

Counting Distinct Elements

Estimating unique elements in a stream is a common challenge. This is especially true in analytics and monitoring.

Bloom Filters: Probabilistic Membership Testing in Streams

Bloom filters efficiently test whether an element has likely appeared before. False positives can happen, but false negatives can’t. This makes them useful for membership checks, such as deciding whether an incoming element is new before counting it.
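Here is a minimal Bloom filter sketch. The class name, the bit-array size, the number of hashes, and the salted BLAKE2b hashing are illustrative assumptions. Real deployments size the filter from the expected item count and target false-positive rate.

```python
import hashlib


class BloomFilter:
    """Probabilistic set: no false negatives, occasional false positives."""

    def __init__(self, num_bits=1024, num_hashes=3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, item):
        data = str(item).encode("utf-8")
        for seed in range(self.num_hashes):
            digest = hashlib.blake2b(
                data, digest_size=8, salt=seed.to_bytes(8, "little")
            ).digest()
            yield int.from_bytes(digest, "little") % self.num_bits

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item):
        # True means "possibly seen"; False means "definitely not seen".
        return all(
            self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item)
        )


seen = BloomFilter()
seen.add("user-1")
```

Because bits are only ever set, an added item always tests positive. A never-added item tests positive only if all of its positions happen to collide with bits set by other items.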

K-minimum Value (KMV) Algorithm: Estimating Distinct Values

KMV algorithms estimate distinct counts by tracking the smallest hash values observed. They balance accuracy and memory usage effectively.
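A sketch of one common KMV formulation: keep the k smallest 64-bit hash values and estimate the distinct count as (k - 1) divided by the k-th smallest hash, scaled to the unit interval. The function name, the default `k`, and the choice of BLAKE2b are assumptions.

```python
import hashlib
import heapq

HASH_SPACE = 2 ** 64


def kmv_estimate(stream, k=256):
    """Estimate the number of distinct items from the k smallest hash values."""
    heap = []     # negated hashes, so heap[0] is the largest of the kept values
    kept = set()  # hashes currently kept, used to skip duplicates
    for item in stream:
        digest = hashlib.blake2b(str(item).encode("utf-8"), digest_size=8).digest()
        h = int.from_bytes(digest, "big")
        if h in kept:
            continue
        if len(heap) < k:
            heapq.heappush(heap, -h)
            kept.add(h)
        elif h < -heap[0]:
            evicted = -heapq.heappushpop(heap, -h)
            kept.discard(evicted)
            kept.add(h)
    if len(heap) < k:
        return len(heap)  # fewer than k distinct hashes: the count is exact
    kth_smallest = -heap[0]
    return (k - 1) * HASH_SPACE // max(kth_smallest, 1)


approx_distinct = kmv_estimate(range(10_000))
```

Memory is bounded by `k` hash values, and a larger `k` trades memory for a tighter estimate.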

FM-Sketch Algorithm: Another Approach for Distinct Count Estimation

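FM-Sketch (the Flajolet–Martin sketch) hashes each element and records bit patterns in the hash values, such as the position of the least significant 1 bit, to estimate cardinality. It is particularly useful when memory constraints are severe.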
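The sketch below is a simplified Flajolet-Martin variant: each of several salted hash functions maintains a small bitmap, and the estimate comes from the average position of the lowest unset bit. The function names, the number of sketches, and the plain averaging are assumptions. Practical systems often use refinements such as stochastic averaging or HyperLogLog instead.

```python
import hashlib

PHI = 0.77351  # bias-correction constant from the Flajolet-Martin analysis


def _hash(item, seed):
    digest = hashlib.blake2b(
        str(item).encode("utf-8"), digest_size=8, salt=seed.to_bytes(8, "little")
    ).digest()
    return int.from_bytes(digest, "little")


def _lowest_set_bit(n):
    """Index of the least significant 1 bit (63 if n is 0)."""
    return (n & -n).bit_length() - 1 if n else 63


def fm_estimate(stream, num_sketches=32):
    """Estimate distinct items; each sketch is a single integer bitmap."""
    bitmaps = [0] * num_sketches
    for item in stream:
        for seed in range(num_sketches):
            bitmaps[seed] |= 1 << _lowest_set_bit(_hash(item, seed))
    total_r = 0
    for bitmap in bitmaps:
        r = 0
        while (bitmap >> r) & 1:  # find the lowest unset bit
            r += 1
        total_r += r
    return 2 ** (total_r / num_sketches) / PHI


approx = fm_estimate(range(2000))
```

Duplicates set bits that are already set, so repeated items never change the estimate. The whole state is `num_sketches` small integers.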

Advanced Stream Processing Tasks

Beyond basic counting, streaming systems support complex analytics.

Event Detection and Pattern Recognition (detection patterns, query system)

Analyzing event sequences and patterns helps systems find anomalies. They can then trigger alerts and support rule-based queries in real time.
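As one simple example of a rule-based pattern, the sketch below flags a burst: at least `threshold` events within the last `window` time units. The function name, the parameters, and the sample timestamps are hypothetical, and timestamps are assumed to arrive in order.

```python
from collections import deque


def detect_bursts(timestamps, threshold, window):
    """Yield each timestamp at which `threshold` events fall within `window`."""
    recent = deque()
    for t in timestamps:
        recent.append(t)
        # Evict events that have aged out of the window.
        while recent and recent[0] <= t - window:
            recent.popleft()
        if len(recent) >= threshold:
            yield t


alerts = list(detect_bursts([1, 2, 3, 50, 51], threshold=3, window=10))
```

The deque holds only events inside the current window, so memory is bounded by the busiest window rather than by the length of the stream.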

Sampling and Summarization Techniques

Sampling reduces data volume while preserving statistical properties. Summarization techniques create compact representations that enable efficient downstream analysis.
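The article does not name a specific sampling method. Reservoir sampling (Algorithm R) is one widely used choice, sketched here because it keeps a uniform random sample of fixed size from a stream of unknown length. The function name and the parameters are illustrative.

```python
import random


def reservoir_sample(stream, k, rng=None):
    """Return k items chosen uniformly at random from a stream of any length."""
    rng = rng or random.Random()
    reservoir = []
    for i, item in enumerate(stream):
        if i < k:
            reservoir.append(item)   # fill the reservoir first
        else:
            j = rng.randint(0, i)    # inclusive on both ends
            if j < k:
                reservoir[j] = item  # keep the new item with probability k / (i + 1)
    return reservoir


sample = reservoir_sample(range(1000), k=10, rng=random.Random(0))
```

Every item seen so far has the same chance of being in the reservoir at any point, so the sample can be read at any time without knowing how long the stream will run.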

Streaming Data Architectures: Building Scalable and Fault-Tolerant Pipelines

The Foundational Layers of a Streaming Architecture

A strong streaming architecture has three main parts: ingestion, processing, and output layers. Each part is designed for reliability and scalability.

Data Ingestion Layer: Capturing Diverse Data Sources

The ingestion layer collects events from multiple producers, ensuring durability and order.

Stream Producers:

- IoT devices (sensor data)

- Web clickstreams

- Financial transactions

- Application logs

- Change data capture (CDC)

Modern pipelines gather data from various sources. This includes sensors, user interactions, transactional systems, logs, and database changes. This mix shows the wide range of streaming use cases.

Message Brokers (Streaming Platforms)

Apache Kafka, Amazon Kinesis Data Streams, Azure Event Hubs, Google Pub/Sub, and RabbitMQ are all intermediaries. They buffer, distribute, and manage streams reliably.

Processing Layer: Real-time Transformation and Analysis

This layer applies business logic, aggregations, and analytics in real time.

Data Preprocessing and Validation within the Stream

Cleaning, validating, and enriching data at ingestion time ensures downstream accuracy and consistency.
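A minimal sketch of in-stream validation and enrichment written as a Python generator. The event fields (`user_id`, `amount`), the `is_large` flag, and the threshold of 1000 are hypothetical and stand in for whatever schema a real pipeline uses.

```python
def clean(events):
    """Drop malformed events, normalize `amount`, and add a derived field."""
    for event in events:
        if not isinstance(event.get("user_id"), str):
            continue  # missing or malformed user id
        try:
            amount = float(event["amount"])
        except (KeyError, TypeError, ValueError):
            continue  # missing or non-numeric amount
        if amount < 0:
            continue  # reject impossible values
        yield {**event, "amount": amount, "is_large": amount >= 1000}


raw = [
    {"user_id": "u1", "amount": "1250.50"},
    {"user_id": None, "amount": "10"},
    {"user_id": "u2", "amount": "oops"},
    {"user_id": "u3", "amount": 20},
]
cleaned = list(clean(raw))
```

Because the generator handles one event at a time, it can sit in front of any downstream consumer without buffering the stream. In practice, rejected events are often routed to a separate channel for inspection rather than silently dropped.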

Data Output Layer: Delivering Insights and Persistence

Processed data must go to systems for visualization, storage, and analytics.

Data Sinks

Common sinks include real-time dashboards such as Grafana and Power BI, data lakes and data warehouses (including tables stored in open formats such as Apache Iceberg), NoSQL databases, and time-series databases. Each supports a different consumption pattern.

Downstream Applications and Machine Learning Models

Streaming outputs often feed machine-learning models, enabling real-time predictions and adaptive systems.

Key Architectural Considerations

Designing effective pipelines requires balancing multiple trade-offs.

Scalability and Elasticity: Handling High Volumes and Velocities

Systems must scale horizontally to handle traffic spikes without degradation.

Fault Tolerance and Data Loss Prevention: Ensuring Reliability

Replication, checkpoints, and replay mechanisms protect against failures.
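To illustrate how checkpoints and replay work together, the toy sketch below sums values from an in-memory log, periodically saves its offset and running total, and resumes from the last checkpoint after a simulated crash. The function name, the dictionary-based checkpoint, and the `fail_at` parameter are all hypothetical. Real systems persist checkpoints to durable storage.

```python
def process(log, checkpoint, every=2, fail_at=None):
    """Sum log entries from the checkpointed offset, checkpointing periodically."""
    offset, total = checkpoint["offset"], checkpoint["total"]
    while offset < len(log):
        if offset == fail_at:
            raise RuntimeError("simulated crash")
        total += log[offset]
        offset += 1
        if offset % every == 0:
            # Offset and state are saved together so they stay consistent.
            checkpoint.update(offset=offset, total=total)
    checkpoint.update(offset=offset, total=total)
    return total


log = [5, 1, 4, 2, 8]  # stand-in for a durable, replayable event log
checkpoint = {"offset": 0, "total": 0}
try:
    process(log, checkpoint, fail_at=3)
except RuntimeError:
    pass  # work done after the last checkpoint is lost
recovered_total = process(log, checkpoint)  # replay from the checkpoint
```

The event at offset 2 is processed twice across the two runs, but because the state is restored from the same checkpoint as the offset, it is counted only once in the final total.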

Low Latency and High Throughput Design Principles

Efficient serialization, parallelism, and backpressure handling are essential.
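One simple form of backpressure is a bounded buffer that refuses new events when the consumer falls behind. The sketch below uses Python's standard `queue.Queue`. The buffer size and the helper name `try_enqueue` are illustrative assumptions.

```python
import queue

buffer = queue.Queue(maxsize=2)  # small bound so the effect is visible


def try_enqueue(event):
    """Return True if the event was accepted, False if the buffer is full."""
    try:
        buffer.put_nowait(event)
        return True
    except queue.Full:
        # Signal the producer to slow down, retry later, or shed load.
        return False


accepted = [try_enqueue(e) for e in ("e1", "e2", "e3")]
```

With no consumer draining the queue, the third event is rejected. A blocking `put` would instead pause the producer until space frees up, which is the other common way to propagate backpressure upstream.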

State Management in Stream Processors

Maintaining and recovering state is critical for correctness in long-running jobs.

Security and Data Governance in Stream Pipelines

Encryption, access control, and compliance measures protect sensitive data.

Integration with Cloud Infrastructure (AWS Kinesis, Azure Stream Analytics)

Cloud-native services simplify the deployment, scaling, and maintenance of streaming systems.

Applications of the Data Stream Model: Real-World Impact and Innovation

Real-time Analytics and Business Intelligence

Organizations use streaming analytics to monitor KPIs, detect trends, and act immediately.

Web Analytics and User Behavior Tracking (Web Traffic, Web Clickstreams)

Streaming models enable real-time insights into user journeys and engagement patterns.

Operational Monitoring and Alerting (application logs, system metrics)

Teams can spot issues early by analyzing logs and metrics as they happen. This helps to keep the system healthy.

Conclusion

The Data Stream Model is the backbone of modern real-time systems. Continuous processing, approximation, and scalable architectures enable organizations to swiftly transform raw data into valuable insights. As data volumes and speeds increase, understanding these concepts is no longer optional for anyone who wants to stay competitive in a real-time world.

FAQ

What is a stream model?

A stream model is a computational framework designed to process data that arrives continuously over time. It assumes data is received in a sequence, often at high speed, and must be analyzed in real time using limited memory and minimal passes over the data.

What is the concept of data streaming?

Data streaming refers to the continuous generation, transmission, and processing of data as it is created. Instead of storing data first and analyzing it later, streaming systems analyze data in motion, enabling real-time insights, faster decisions, and immediate responses to events.

What is the data stream algorithm?

A data stream algorithm is an algorithm specifically designed to operate under the constraints of streaming data. These algorithms typically process data in a single pass, use limited memory, and often rely on approximation techniques to efficiently extract insights from large or unbounded data streams.

What is an example of a data stream?

A common example of a data stream is web clickstream data, where user interactions such as page views and clicks are generated continuously. Other examples include sensor data from IoT devices, financial transaction streams, application logs, and real-time social media feeds.

How do memory and time constraints affect algorithms in the data stream model?

Memory and time constraints significantly shape algorithms in the data stream model. Since storing all incoming data is impractical, algorithms must use compact data structures and approximation methods. Time constraints require fast, per-item processing, ensuring that each data element is handled immediately without delaying the stream.